I have an unnormalized events-diary CSV from a client that I'm trying to load into a MySQL table so that I can refactor it into a sane format. I created a table called CSVImport that has one field for every column of the CSV file. The CSV contains 99 columns, so this was a hard enough task in itself:

    CREATE TABLE CSVImport (id INT);
    ALTER TABLE CSVImport ADD COLUMN Title VARCHAR(256);
    ALTER TABLE CSVImport ADD COLUMN Company VARCHAR(256);
    ALTER TABLE CSVImport ADD COLUMN NumTickets VARCHAR(256);
    ...
    ALTER TABLE CSVImport ADD COLUMN Date49 VARCHAR(256);
    ALTER TABLE CSVImport ADD COLUMN Date50 VARCHAR(256);

No constraints are on the table, and all the fields hold VARCHAR(256) values, except the columns which contain counts (represented by INT), yes/no (represented by BIT), prices (represented by DECIMAL), and text blurbs (represented by TEXT).

I tried to load the data from the file into the table:

    LOAD DATA INFILE '/home/paul/clientdata.csv' INTO TABLE CSVImport;
    Query OK, 2023 rows affected, 65535 warnings (0.08 sec)
    Records: 2023 Deleted: 0 Skipped: 0 Warnings: 198256

I think the problem is that the text blurbs contain more than one line, and MySQL is parsing the file as if each new line corresponded to one database row. I can load the file into OpenOffice without a problem. The clientdata.csv file contains 2593 lines and 570 records. I think it is comma delimited, and text is apparently delimited with double quotes.

    print ('select * from ' + ('Table created: ' +
    how you should call the Proc.
    Join ShadowMapTable t3 on (t1.name = t3.inCsv)
    Drop table ' + + ' into ' + + ' from ' + + ' '
    Where inCsv = t1.name, t3.inCsv, t3.inSql
    Where t2.name =
    Define the ColumnListRenamed -> used in the into clause.
    Select = t1.name, = t1.name + ' as ' + t3.inSql
    Join ShadowMapTable t3 on (t1.name = t3.inCsv)
    Join sys.tables t2 on (t1.object_id = t2.object_id)
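On the MySQL import question: the gap between 2593 lines and 570 records is what you would expect when quoted text blurbs contain line breaks. By default, `LOAD DATA` expects tab-separated fields with no enclosing character, so each physical line becomes a row unless the statement says otherwise with clauses such as `FIELDS TERMINATED BY ','` and `ENCLOSED BY '"'` (plus `IGNORE 1 LINES` if the first line holds column names). The sketch below only illustrates the line-versus-record difference with Python's `csv` module. The sample data and the function name `count_lines_and_records` are made up for illustration and are not taken from clientdata.csv.

```python
import csv
import io

# Hypothetical miniature of a CSV like clientdata.csv: a header row plus two
# records, one of which has a quoted text blurb spanning two physical lines.
SAMPLE = (
    'id,Title,Blurb\n'
    '1,"Spring Gala","Doors open at 7.\nDinner at 8."\n'
    '2,"Jazz Night","One line only."\n'
)


def count_lines_and_records(text):
    """Return (physical line count, CSV record count excluding the header)."""
    physical_lines = len(text.splitlines())
    # newline='' lets the csv module handle line breaks inside quoted fields.
    reader = csv.reader(io.StringIO(text, newline=''), delimiter=',', quotechar='"')
    next(reader)  # skip the header row
    records = sum(1 for _ in reader)
    return physical_lines, records


lines, records = count_lines_and_records(SAMPLE)
print(lines, records)  # 4 physical lines, 2 records
```

A parser that splits on newlines alone would see three data rows here instead of two, which is the same kind of mismatch the import reported.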

Select * into ShadowMapTable from mapTable

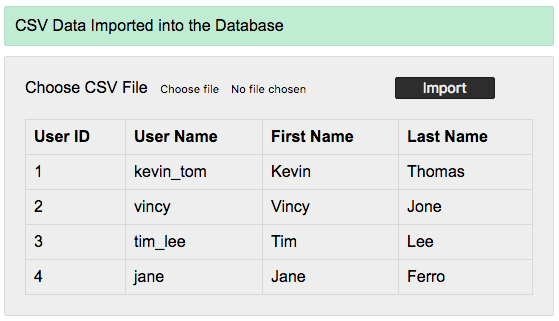

Here, I'm supposing you already have a table with your CSV data imported. So I'm populating the csvImported table with some stuff, just to test.

Table structs used:

    create table mapTable(

Populating the mapTable table:

    insert into mapTable values ('Name1', 'ID')
    insert into mapTable values ('Name2', 'ID2')
    insert into mapTable values ('Name3', 'ID3')

Populating the csvImported table:

    insert into csvImported values (11, 122, 333)
    insert into csvImported values (110, 1422, 37833)
    insert into csvImported values (101, 1252, 33213)

    CREATE PROCEDURE varchar(max)
    DECLARE varchar(max) = varchar(max) = varchar(max) = TinyInt = varchar(max) = varchar(max) = varchar(max) = ''
    If ((select OBJECT_ID('ShadowMapTable')) > 0 )

This makes a few assumptions: always 3 columns, all data is type int, destination table is static, etc. If your needs are more complex, you might start looking at a dedicated ETL tool.

    CREATE PROCEDURE Import varchar(max)
    CREATE TABLE #header ( sysname, sysname, sysname)
    SET = N'BULK INSERT #header FROM ' + + ' WITH (DATAFILETYPE = ''char'',FIELDTERMINATOR = '','',ROWTERMINATOR = ''0x0D0A'',FIRSTROW = 1, LASTROW = 1) '
    EXEC sp_executesql
    = + QUOTENAME() + ' int' FROM #header UNPIVOT( FOR IN (,)) p ORDER BY
    SELECT = + QUOTENAME( + ' AS ' + QUOTENAME() FROM MyMetadata ORDER BY
    SET = N'CREATE TABLE #data = + N'BULK INSERT #data FROM ' + + ' WITH (DATAFILETYPE = ''char'',FIELDTERMINATOR = '','',ROWTERMINATOR = ''0x0D0A'',FIRSTROW = 2) '
    EXEC sp_executesql
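The header-driven idea in the procedure fragments (read the first row, quote each name, append a type) can be sketched outside the database as well. What follows is a rough Python illustration, not the procedure itself. The helper names `quote_identifier` and `create_table_sql` are hypothetical, the sample column names are invented, and every column is assumed to be an integer, mirroring the "all data is type int" assumption.

```python
import csv
import io


def quote_identifier(name):
    """Bracket-quote an identifier, doubling any closing bracket,
    in the style of T-SQL's QUOTENAME default."""
    return "[" + name.replace("]", "]]") + "]"


def create_table_sql(csv_text, table_name, column_type="int"):
    """Build a CREATE TABLE statement from the first (header) row of a CSV.

    Assumes every column shares one type, as in the procedure's stated
    assumptions; a real import would need per-column types.
    """
    header = next(csv.reader(io.StringIO(csv_text, newline="")))
    columns = ", ".join(
        f"{quote_identifier(name)} {column_type}" for name in header
    )
    return f"CREATE TABLE {quote_identifier(table_name)} ({columns})"


# Hypothetical three-column file matching the "always 3 columns" assumption.
sample = "Name1,Name2,Name3\n11,122,333\n"
print(create_table_sql(sample, "csvImported"))
```

Generating the statement from the header avoids typing each column by hand, which matters for a file with 99 columns, but the output should still be reviewed before it is run against a real database.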
